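YueWen (跃问) Free Service

Supports high-speed streaming output, multi-turn conversations, web search, long-document analysis, and image analysis. Zero-configuration deployment, multi-token support, and automatic cleanup of conversation traces.

Fully compatible with the ChatGPT API.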

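The following eight free-api projects are also worth a look:

Moonshot AI (Kimi.ai) interface to API: kimi-free-api

Alibaba Tongyi (Qwen) interface to API: qwen-free-api

Zhipu AI (Zhipu Qingyan) interface to API: glm-free-api

Metaso AI (秘塔AI) interface to API: metaso-free-api

iFlytek Spark (讯飞星火) interface to API: spark-free-api

MiniMax (Hailuo AI) interface to API: hailuo-free-api

DeepSeek (深度求索) interface to API: deepseek-free-api

Lingxin Intelligence (聆心智能, Emohaa) interface to API: emohaa-free-api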

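Disclaimer

Reverse-engineered APIs are unstable. To avoid the risk of being banned, use the paid official API from StepFun (阶跃星辰) at https://platform.stepfun.com/ instead.

This organization and its members accept no financial donations or transactions. This project is purely for research, exchange, and learning!

For personal use only. Providing the service to others or using it commercially is prohibited, to avoid putting load on the official service; otherwise you bear the risk yourself!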

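Online demo

This link is for temporary feature testing only and is not for long-term use. For long-term use, deploy the service yourself.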

https://udify.app/chat/RGqDVPHspgQgGSgf

效果示例

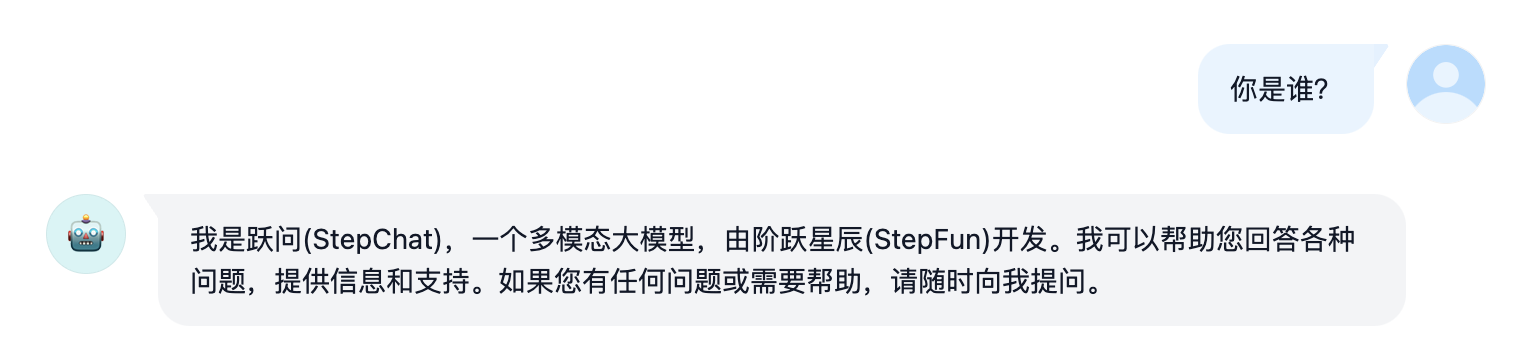

验明正身Demo

多轮对话Demo

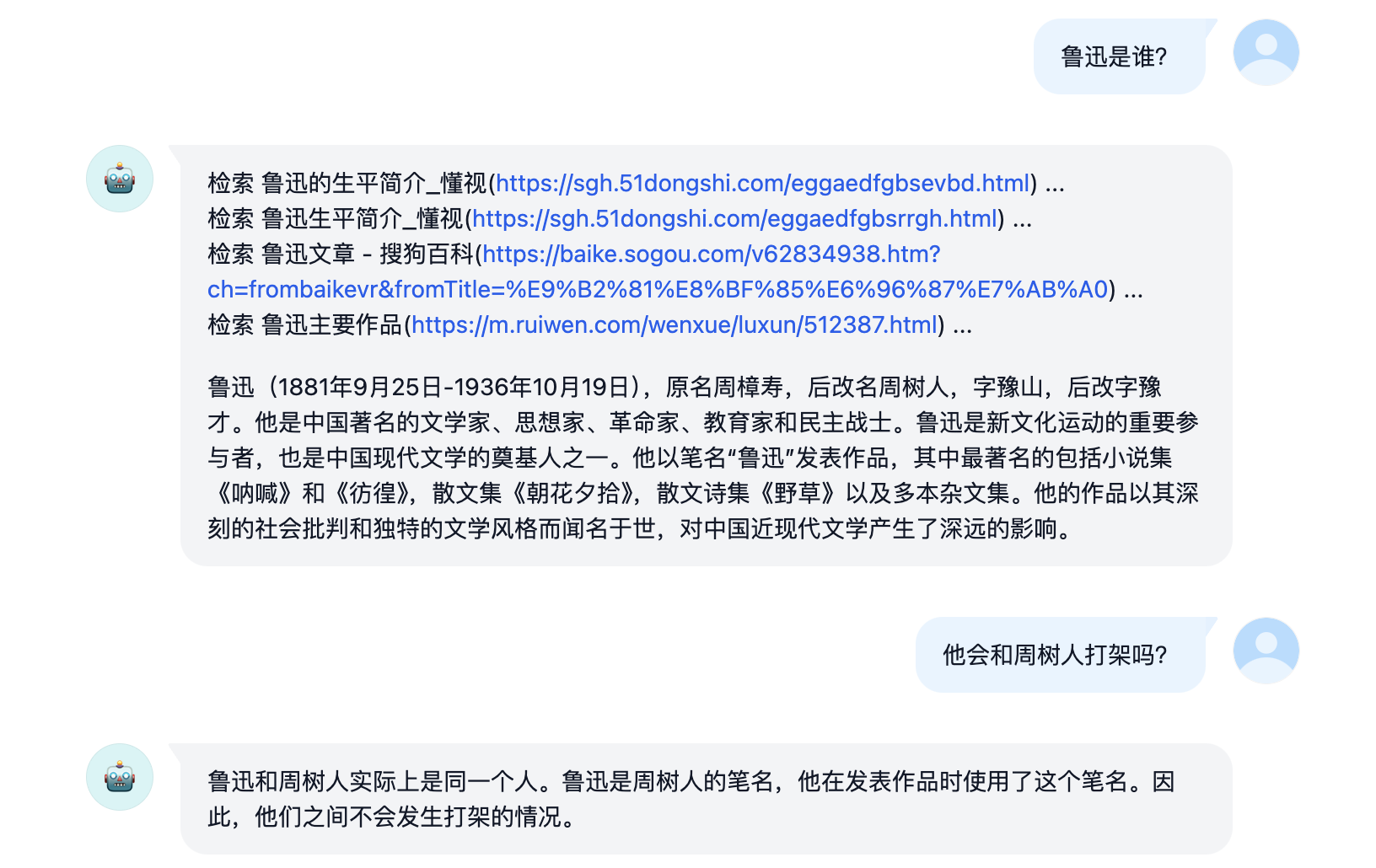

联网搜索Demo

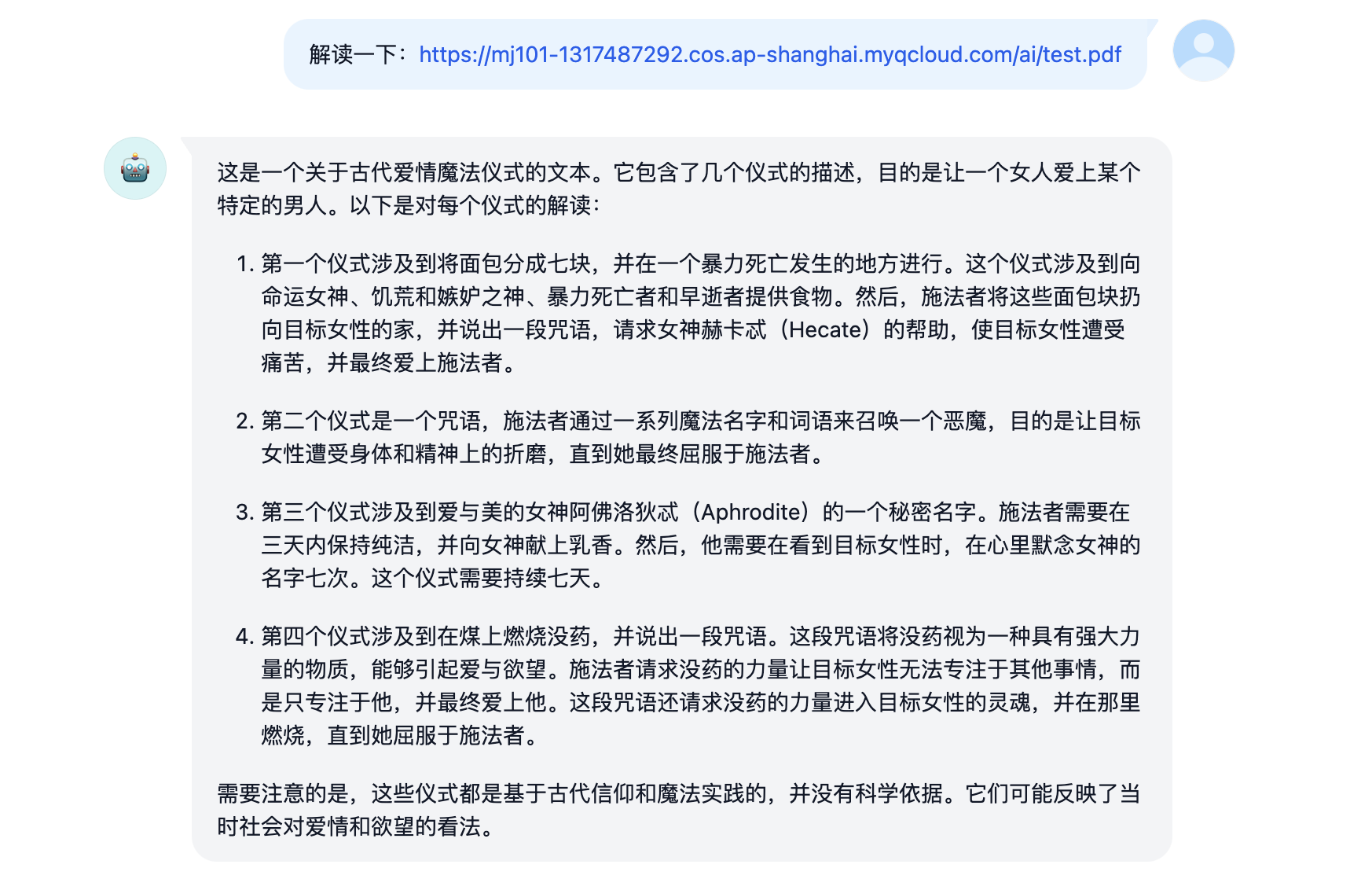

长文档解读Demo

图像解析Demo

接入准备

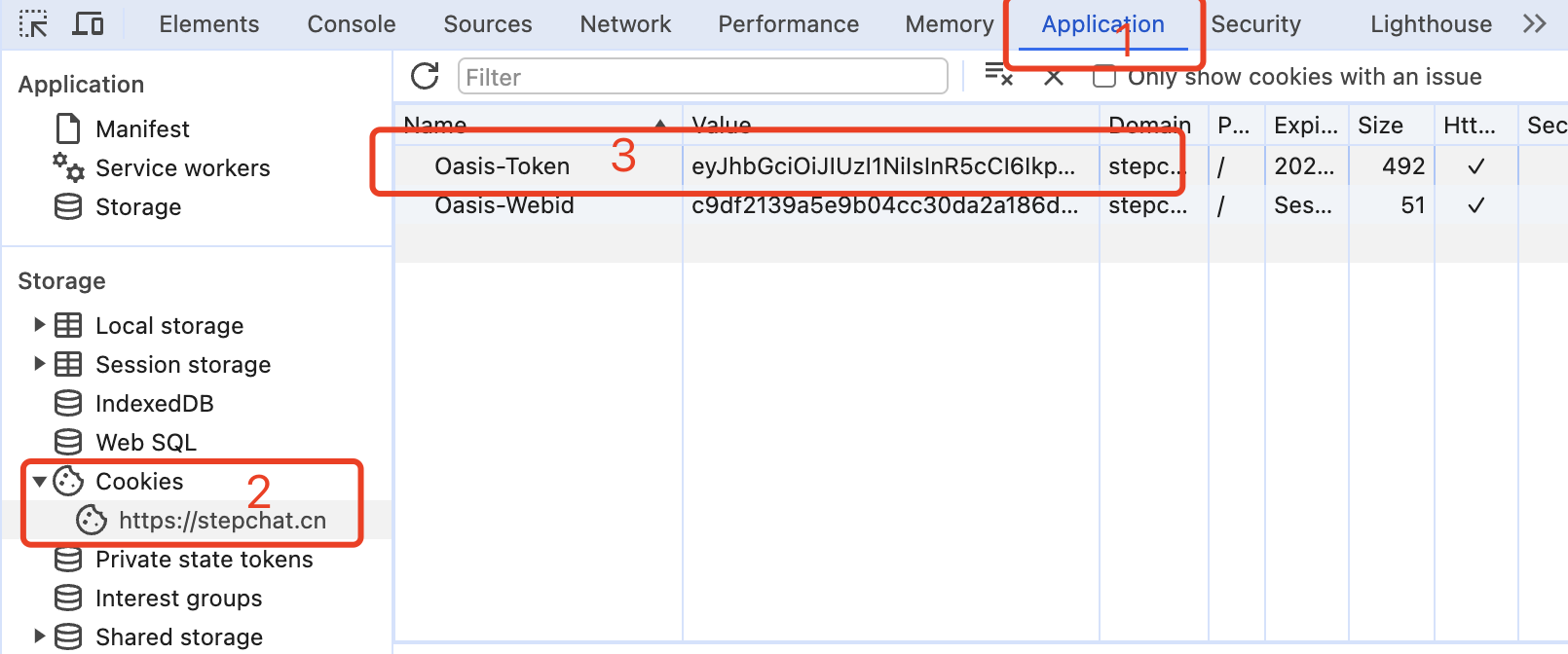

从 yuewen.cn 获取deviceId和Oasis-Token

进入StepChat随便发起一个对话,然后按F12打开开发者工具。

- 从Application > LocalStorage中找到

deviceId的值(去除双引号),例如:267bcc81a01c2032a11a3fc6ec3e372c380eb9d1

- 从Application > Cookies中找到

Oasis-Token的值,例如:eyJhbGciOiJIUzI1NiIsInR5cCI6IkpXVCJ9...

- 将

deviceId和Oasis-Token用@拼接为Token,这将作为Authorization的Bearer Token值:Authorization: Bearer 267bcc81a01c2032a11a3fc6ec3e372c380eb9d1@eyJhbGciOiJIUzI1NiIsInR5cCI6IkpXVCJ9...
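The joining step is easy to get wrong by leaving the LocalStorage quotes in place, so here is a minimal Python sketch of it. The function name `build_authorization_header` is hypothetical and not part of step-free-api; the sample values are the truncated placeholders from the steps above.

```python
def build_authorization_header(device_id: str, oasis_token: str) -> dict:
    """Join deviceId and Oasis-Token with '@' and wrap them as a Bearer header."""
    # LocalStorage shows the deviceId wrapped in double quotes; strip them.
    device_id = device_id.strip().strip('"')
    oasis_token = oasis_token.strip()
    if not device_id or not oasis_token:
        raise ValueError("both deviceId and Oasis-Token are required")
    return {"Authorization": f"Bearer {device_id}@{oasis_token}"}


# Placeholder values; substitute the ones copied from your own browser session.
headers = build_authorization_header(
    '"267bcc81a01c2032a11a3fc6ec3e372c380eb9d1"',
    "eyJhbGciOiJIUzI1NiIsInR5cCI6IkpXVCJ9...",
)
```

The resulting dictionary can be passed as the headers of any HTTP client call to the service.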

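Multi-account access

You can provide the refresh_token of multiple accounts, joined with commas:

Authorization: Bearer TOKEN1,TOKEN2,TOKEN3

For each request, the service picks one of them at random.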
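To illustrate the behavior described here, the following sketch splits a comma-separated header value and picks one token at random. It is an illustration only, not the project's actual implementation; the function name `pick_token` is hypothetical.

```python
import random
from typing import Optional


def pick_token(authorization: str, rng: Optional[random.Random] = None) -> str:
    """Pick one token at random from a 'Bearer T1,T2,...' header value."""
    rng = rng or random.Random()
    scheme, _, value = authorization.partition(" ")
    if scheme.lower() != "bearer":
        raise ValueError("expected a Bearer authorization value")
    # Ignore empty entries caused by stray commas or whitespace.
    tokens = [t.strip() for t in value.split(",") if t.strip()]
    if not tokens:
        raise ValueError("no tokens found in the authorization value")
    return rng.choice(tokens)


chosen = pick_token("Bearer TOKEN1,TOKEN2,TOKEN3")
```

Because the choice is random per request, a single expired token among several will cause intermittent rather than constant failures.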

Docker deployment

Please prepare a server with a public IP address and open port 8000.

Pull the image and start the service

docker run -it -d --init --name step-free-api -p 8000:8000 -e TZ=Asia/Shanghai vinlic/step-free-api:latest

View real-time service logs

docker logs -f step-free-api

Restart the service

docker restart step-free-api

Stop the service

docker stop step-free-api

Docker-compose deployment

version: '3'

services:
  step-free-api:
    container_name: step-free-api
    image: vinlic/step-free-api:latest
    restart: always
    ports:
      - "8000:8000"
    environment:
      - TZ=Asia/Shanghai

Render deployment

Note: Some deployment regions may be unable to connect to step. If the container logs show request timeouts or connection failures, switch to another deployment region!

Note: Container instances on free accounts stop automatically after a period of inactivity, which can delay the next request by 50 seconds or more. See Render Container Keep-Alive.

- Fork this project to your GitHub account.
- Visit Render and log in with your GitHub account.
- Build your Web Service (New+ -> Build and deploy from a Git repository -> Connect the project you forked -> Select deployment region -> Choose Free instance type -> Create Web Service).
- After the build completes, copy the assigned domain name and append the API path (for example /v1/chat/completions) to access the service.

Vercel deployment

Note: The request response timeout for Vercel free accounts is 10 seconds, but the API response usually takes longer, so you may encounter a 504 timeout error returned by Vercel!

Please ensure that the Node.js environment is installed first.

npm i -g vercel --registry http://registry.npmmirror.com

vercel login

git clone https://github.com/LLM-Red-Team/step-free-api

cd step-free-api

vercel --prod

Native deployment

Please prepare a server with a public IP address and open port 8000.

Please install the Node.js environment and configure the environment variables first, making sure the node command is available.

Install dependencies

npm i

Install PM2 for process monitoring

npm i -g pm2

Compile and build. The dist directory appears once the build completes.

npm run build

Start the service

pm2 start dist/index.js --name "step-free-api"

View real-time service logs

pm2 logs step-free-api

Restart the service

pm2 reload step-free-api

Stop the service

pm2 stop step-free-api

Recommended clients

The following community-modified clients make integrating with the free-api series projects faster and simpler, and they support document/image uploads!

LobeChat developed by Clivia https://github.com/Yanyutin753/lobe-chat

ChatGPT Web developed by 时光@ https://github.com/SuYxh/chatgpt-web-sea

API List

Currently supports the OpenAI-compatible /v1/chat/completions endpoint. You can call it from any OpenAI-compatible client, or integrate it through online services such as Dify.

Chat Completions

Chat completions endpoint, compatible with OpenAI's chat-completions-api.

POST /v1/chat/completions

Set the Authorization header:

Authorization: Bearer [refresh_token]

Request data:

{
  // Model name can be filled in arbitrarily
  "model": "step",
  "messages": [
    {
      "role": "user",
      "content": "Who are you?"
    }
  ],
  // Set to true if using SSE stream, default is false
  "stream": false
}

Response data:

{
  "id": "85466015488159744",
  "model": "step",
  "object": "chat.completion",
  "choices": [
    {
      "index": 0,
      "message": {
        "role": "assistant",
        "content": "I am StepChat, a multimodal large language model developed by StepFun. I can answer your questions, provide information and help, while supporting multimodal interactions such as text and images. If you have any questions or need assistance, please feel free to ask me."
      },
      "finish_reason": "stop"
    }
  ],
  "usage": {
    "prompt_tokens": 1,
    "completion_tokens": 1,
    "total_tokens": 2
  },
  "created": 1711829974
}
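The request and response above can be exercised from Python with only the standard library. This is a minimal sketch: `BASE_URL` assumes the service runs locally on port 8000 as in the Docker instructions, and the function names are hypothetical.

```python
import json
import urllib.request

# Assumption: the service is reachable locally on port 8000.
BASE_URL = "http://localhost:8000"


def build_chat_request(token: str, prompt: str, stream: bool = False) -> urllib.request.Request:
    """Build a POST request for /v1/chat/completions with a single user message."""
    payload = {
        "model": "step",  # the model name can be filled in arbitrarily
        "messages": [{"role": "user", "content": prompt}],
        "stream": stream,
    }
    return urllib.request.Request(
        f"{BASE_URL}/v1/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {token}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def chat(token: str, prompt: str) -> str:
    """Send a non-streaming request and return the assistant's reply text."""
    with urllib.request.urlopen(build_chat_request(token, prompt), timeout=120) as resp:
        body = json.load(resp)
    return body["choices"][0]["message"]["content"]
```

Calling `chat(token, "Who are you?")` with a valid token should return the text found under `choices[0].message.content` in the response shown above.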

Document Analysis

Provide an accessible file URL or BASE64_URL for parsing.

POST /v1/chat/completions

Set the Authorization header:

Authorization: Bearer [refresh_token]

Request data:

{
  // Model name can be filled in arbitrarily
  "model": "step",
  "messages": [
    {
      "role": "user",
      "content": [
        {
          "type": "file",
          "file_url": {
            "url": "https://mj101-1317487292.cos.ap-shanghai.myqcloud.com/ai/test.pdf"
          }
        },
        {
          "type": "text",
          "text": "What does the document say?"
        }
      ]
    }
  ]
}

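Response data:

{
  "id": "85774360661086208",
  "model": "step",
  "object": "chat.completion",
  "choices": [
    {
      "index": 0,
      "message": {
        "role": "assistant",
        "content": "This is a document about love magic. It contains four parts:\n\n1. **PMG 4.1390 – 1495**: A ritual that uses bread and incantations to attract a desired woman. The ritual requires dividing the bread into seven pieces, then reciting the incantation and scattering them at a specific location.\n2. **PMG 4.1342 – 57**: A spell that summons a demon to torment a woman named Tereous until she falls in love with and unites with a man named Didymos.\n3. **PGM 4.1265 – 74**: A spell to win the heart of a beautiful woman. It requires staying pure for three consecutive days, offering frankincense to Aphrodite, the goddess of love, and silently reciting her secret name.\n4. **PGM 4.1496 – 1**: A spell that uses myrrh to attract a specific woman. The caster burns myrrh on coals and recites the incantation so that the woman thinks only of the caster and eventually falls in love with them."
      },
      "finish_reason": "stop"
    }
  ],
  "usage": {
    "prompt_tokens": 1,
    "completion_tokens": 1,
    "total_tokens": 2
  },
  "created": 1711903489
}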
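The document request differs from a plain chat request only in its content list, so a small helper can build it and read the reply back out. This is a sketch under the request and response shapes shown above; the function names and the example.com URL are hypothetical.

```python
import json


def build_document_payload(file_url: str, question: str, model: str = "step") -> dict:
    """Build a request body pairing a file part with a text question."""
    return {
        "model": model,
        "messages": [
            {
                "role": "user",
                "content": [
                    {"type": "file", "file_url": {"url": file_url}},
                    {"type": "text", "text": question},
                ],
            }
        ],
    }


def extract_reply(response_body: str) -> str:
    """Return the assistant's text from a chat.completion response body."""
    data = json.loads(response_body)
    return data["choices"][0]["message"]["content"]


payload = build_document_payload("https://example.com/test.pdf", "What does the document say?")
```

The payload is sent to POST /v1/chat/completions with the same Authorization header as any other request.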

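Image analysis

Provide an accessible image URL or BASE64_URL for parsing.

This format is compatible with the gpt-4-vision-preview API format. You can also use it to send documents for parsing.

POST /v1/chat/completions

Set the Authorization header:

Authorization: Bearer [refresh_token]

Request data: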

{
  // Model name can be filled in arbitrarily
  "model": "step",
  "messages": [
    {
      "role": "user",
      "content": [
        {
          "type": "image_url",
          "image_url": {
            "url": "https://k.sinaimg.cn/n/sinakd20111/106/w1024h682/20240327/babd-2ce15fdcfbd6ddbdc5ab588c29b3d3d9.jpg/w700d1q75cms.jpg"
          }
        },
        {
          "type": "text",
          "text": "What does the image show?"
        }
      ]
    }
  ]
}

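Response data:

{
  "id": "85773574417829888",
  "model": "step",
  "object": "chat.completion",
  "choices": [
    {
      "index": 0,
      "message": {
        "role": "assistant",
        "content": "This image shows an event venue, apparently a launch event for some new product or technology. In the center of the image is a large screen that reads \"Innovative Technology and Product Debut\", and the screen also displays the logos or names of several companies, such as \"RWKV\", \"财跃星辰\", \"阶跃星辰\", \"商汤\", and \"零方科技\". On the stage below the screen, several people in formal attire are interacting, possibly giving a product launch or demonstration. The whole scene gives a formal and strongly high-tech impression."
      },
      "finish_reason": "stop"
    }
  ],
  "usage": {
    "prompt_tokens": 1,
    "completion_tokens": 1,
    "total_tokens": 2
  },
  "created": 1711903302
}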
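For a local image there is no public URL to pass, which is where the BASE64_URL option comes in. The sketch below assumes that BASE64_URL means a standard `data:<mime>;base64,<payload>` data URL; the source does not spell out the exact form, so treat that as an assumption. The function names are hypothetical.

```python
import base64
import mimetypes


def to_data_url(data: bytes, filename: str) -> str:
    """Encode raw bytes as a data URL, guessing the MIME type from the filename."""
    mime, _ = mimetypes.guess_type(filename)
    encoded = base64.b64encode(data).decode("ascii")
    # Assumption: the service accepts data URLs of this form as BASE64_URL.
    return f"data:{mime or 'application/octet-stream'};base64,{encoded}"


def image_message(image_url: str, question: str) -> dict:
    """Build a user message in the gpt-4-vision-preview style used above."""
    return {
        "role": "user",
        "content": [
            {"type": "image_url", "image_url": {"url": image_url}},
            {"type": "text", "text": question},
        ],
    }
```

To send a local file, read its bytes, pass them through `to_data_url`, and use the result as the `image_url` argument of `image_message`.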

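refresh_token liveness check

Checks whether a refresh_token is still alive: live is true if it is, false otherwise. Do not call this endpoint frequently (less than 10 minutes apart).

POST /token/check

Request data:

{
  "token": "267bcc81a01c2032a11a3fc6ec3e372c380eb9d1@eyJhbGciOiJIUzI1NiIsInR5cCI6IkpXVCJ9..."
}

Response data:

{
  "live": true
}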
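One way to respect the 10-minute guidance is to cache the last result per token on the client side. The sketch below is an assumption-laden illustration: `ThrottledTokenChecker`, `check_token_remote`, and the local `base_url` are hypothetical names and values, not part of step-free-api.

```python
import json
import time
import urllib.request
from typing import Callable, Dict, Tuple

MIN_INTERVAL_SECONDS = 10 * 60  # do not re-check a token more often than this


def check_token_remote(token: str, base_url: str = "http://localhost:8000") -> bool:
    """Call POST /token/check and return the 'live' flag (assumes a local deployment)."""
    req = urllib.request.Request(
        f"{base_url}/token/check",
        data=json.dumps({"token": token}).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(req, timeout=30) as resp:
        return bool(json.load(resp)["live"])


class ThrottledTokenChecker:
    """Cache each token's last result so the endpoint is hit at most once per interval."""

    def __init__(
        self,
        check: Callable[[str], bool] = check_token_remote,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self._check = check
        self._clock = clock
        self._cache: Dict[str, Tuple[float, bool]] = {}

    def is_live(self, token: str) -> bool:
        now = self._clock()
        cached = self._cache.get(token)
        if cached is not None and now - cached[0] < MIN_INTERVAL_SECONDS:
            return cached[1]  # still fresh: reuse the cached answer
        live = self._check(token)
        self._cache[token] = (now, live)
        return live
```

The `check` and `clock` parameters are injectable so the throttling logic can be tested without a running service.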

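Notes

Nginx reverse proxy optimization

If you use Nginx as a reverse proxy for step-free-api, add the following directives to improve streaming output and the user experience.

# Disable proxy buffering. When set to off, Nginx passes the backend's response to the client as soon as it is received instead of buffering it first.

proxy_buffering off;

# Enable chunked transfer encoding, which lets the server send dynamically generated content in chunks without knowing its total size in advance.

chunked_transfer_encoding on;

# Enable TCP_NOPUSH, which tells Nginx to fill packets as fully as possible before sending them to the client. It is normally used together with sendfile and can improve network efficiency.

tcp_nopush on;

# Enable TCP_NODELAY, which tells Nginx to send small packets immediately rather than delaying them. In some cases this reduces network latency.

tcp_nodelay on;

# Set the keep-alive timeout, here 120 seconds. If the client and server exchange no further traffic within this time, the connection is closed.

keepalive_timeout 120;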
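To check that the proxy passes streamed output through piece by piece, a client can print each fragment as it arrives. The following is a minimal sketch of the parsing side, assuming the stream uses OpenAI-style SSE chunks (`data: {...}` lines carrying `choices[].delta.content`, ending with `data: [DONE]`). That format is inferred from the stated OpenAI compatibility and is not confirmed here; the function name is hypothetical.

```python
import json
from typing import Iterable, Iterator


def iter_stream_text(lines: Iterable[str]) -> Iterator[str]:
    """Yield text fragments from OpenAI-style SSE lines (assumed format)."""
    for raw in lines:
        line = raw.strip()
        if not line.startswith("data:"):
            continue  # skip blank separators and non-data fields
        data = line[len("data:"):].strip()
        if data == "[DONE]":
            break  # end-of-stream sentinel
        chunk = json.loads(data)
        for choice in chunk.get("choices", []):
            text = choice.get("delta", {}).get("content")
            if text:
                yield text


sample_lines = [
    'data: {"choices": [{"index": 0, "delta": {"role": "assistant", "content": "Hel"}}]}',
    "",
    'data: {"choices": [{"index": 0, "delta": {"content": "lo"}}]}',
    "",
    "data: [DONE]",
]
reply = "".join(iter_stream_text(sample_lines))
```

With buffering disabled in Nginx, fragments should appear progressively rather than all at once when the response finishes.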

Token statistics

Because inference does not run inside step-free-api, tokens cannot be counted, so the usage fields are returned as fixed numbers!
